As part of my role on the Academic Applications team, I get the opportunity to lead the software architecture for applications built on many of Quanser’s systems. This year we expanded our work to fully support ROS as the main framework for autonomous systems. The development process also coincided with Quanser’s participation in the American Control Conference in Denver, Colorado. If you have not yet attended a technical conference, I encourage you to go! I have always enjoyed, and been grateful for, being an exhibitor when the opportunity presents itself. As an R&D engineer, I get to have very insightful discussions with researchers on how Quanser’s systems can help them advance their work.

This year we also expanded the self-driving competition to emulate an Uber-like system in which each vehicle has to navigate to a taxi hub and complete a series of rides. This gave me the opportunity to demonstrate an alternative approach that uses ROS to solve this problem. The solution is based on Quanser’s QCar 2, which combines the high-end computing power of NVIDIA’s SOM with a suite of sensors to form a complete test bench for validating autonomous systems.

Validation with a virtual platform

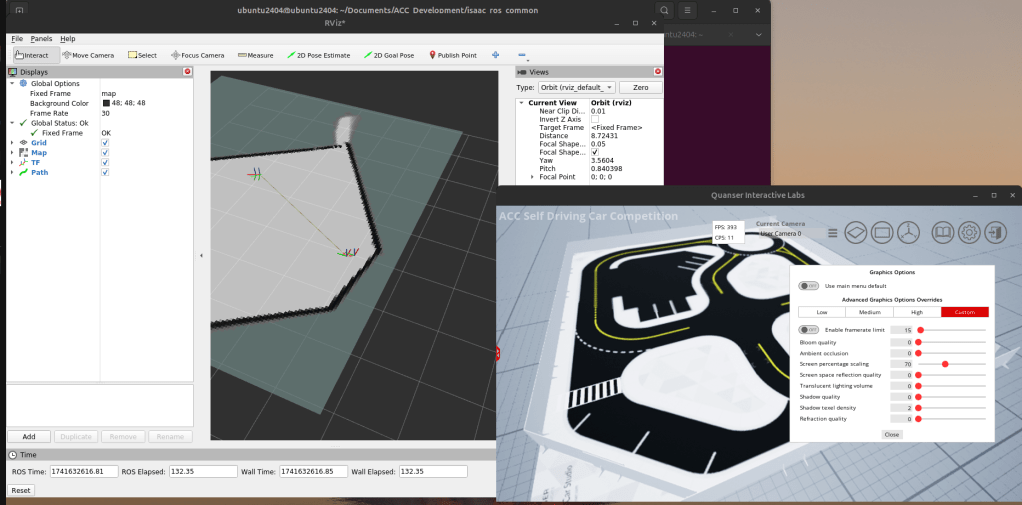

Quanser has a high-fidelity simulation for validating and testing self-driving algorithms. With this as a starting point, I used ROS 2 Humble to create the first iteration of this self-driving stack. In ROS 2, the Cartographer package generates occupancy grids of the world and estimates the approximate location of an agent in space. I used it to develop one component of a self-driving vehicle, the localization suite. The intermediate result shows a simulation window like this:

Figure 1: Cartographer developing an occupancy grid for vehicle localization

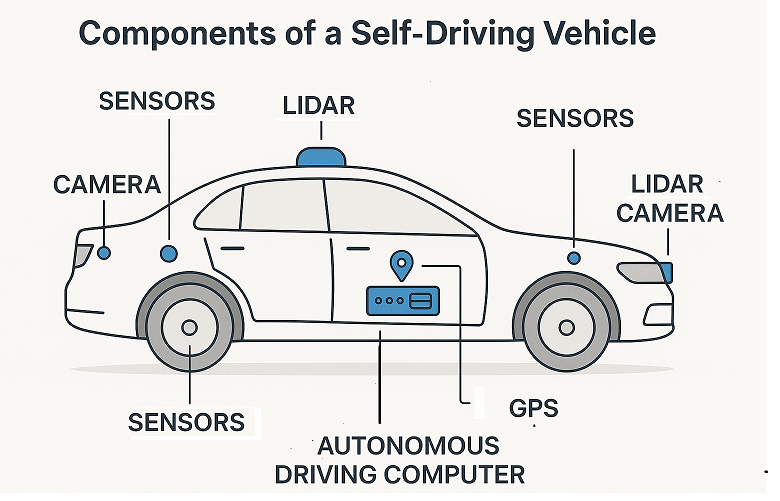

As a reminder, for a vehicle to perform self-driving tasks it requires a combination of the following components:

The sensor components can include encoders for wheel velocity estimation, IMUs to measure body acceleration and roll, or an array of cameras, like those on Tesla vehicles, to sense the world around the vehicle.

My job was to architect every subcomponent to ensure safety and efficient real-time performance. The second portion of the competition required taking the work done in simulation and turning it into a working autonomy stack able to detect and react to signs and objects in the scene. This meant developing a behaviour stack that modifies the vehicle’s actions so that it reacts safely to road signage. To that end, a classical computer vision approach was developed and first tested in simulation to validate real-time performance.

Figure 2: Traffic detection system in action

A node-graph representation of the world generates a smooth path for the vehicle to follow once it receives a start and a goal location. This is very similar to what Google Maps gives you when you decide to travel across the city. In the simulation above, the smooth green path is the output of the global path planner. A local planner and a waypoint controller then drive the vehicle along that path across the map.
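To make the global planning idea concrete, here is a minimal sketch of a shortest-path search over a node graph. It is not the planner running on the QCar: the node names, coordinates, one-way edges, and the choice of Dijkstra’s algorithm are all assumptions made for illustration, and the sketch returns only the node sequence without any path smoothing.

```python
import heapq
import math

# Hypothetical road network: node id -> (x, y) position in metres.
NODES = {
    "hub": (0.0, 0.0),
    "a": (2.0, 0.0),
    "b": (2.0, 2.0),
    "c": (0.0, 3.0),
    "goal": (4.0, 2.0),
}
# Hypothetical directed edges (one-way lanes between nodes).
EDGES = {
    "hub": ["a", "c"],
    "a": ["b"],
    "b": ["goal"],
    "c": ["b"],
    "goal": [],
}


def edge_length(u, v):
    """Straight-line distance between two connected nodes."""
    (x1, y1), (x2, y2) = NODES[u], NODES[v]
    return math.hypot(x2 - x1, y2 - y1)


def shortest_path(start, goal):
    """Dijkstra's algorithm; returns (length, [node ids]) or (inf, [])."""
    best = {start: 0.0}   # best known distance to each node
    parent = {}           # back-pointers used to rebuild the route
    queue = [(0.0, start)]
    while queue:
        dist, node = heapq.heappop(queue)
        if node == goal:
            path = [goal]
            while path[-1] != start:
                path.append(parent[path[-1]])
            return dist, path[::-1]
        if dist > best.get(node, math.inf):
            continue  # stale queue entry, a shorter route was already found
        for nxt in EDGES[node]:
            cand = dist + edge_length(node, nxt)
            if cand < best.get(nxt, math.inf):
                best[nxt] = cand
                parent[nxt] = node
                heapq.heappush(queue, (cand, nxt))
    return math.inf, []


length, route = shortest_path("hub", "goal")
print(route, length)
```

In a full stack, the returned node sequence would typically be interpolated into a smooth curve before being handed to the local planner and waypoint controller.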

Virtual to Physical

As roboticists, we all know there is always a simulation-to-reality gap. This is where the next stage of validation came in. In simulation, the QCar was limited to slow movements because of limitations in the simulation engine. Having the physical system enabled me to incorporate more complex state estimation techniques and increase the speed of the vehicle as it traversed the world. The first step was to generate smooth state estimates. To do this, an Extended Kalman Filter was implemented to improve the pose estimate from Cartographer.
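For readers who have not built one, the sketch below shows the general shape of an Extended Kalman Filter that smooths a 2D pose. It is an illustration under stated assumptions, not the filter used on the QCar: the state is assumed to be `[x, y, heading]`, the motion model is a simple unicycle driven by speed and yaw rate, the measurement is assumed to be a full pose (as a SLAM system might publish), and all noise values are made up. Small matrix helpers are written out in plain Python to keep the example dependency-free.

```python
import math


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(len(b)))
             for j in range(len(b[0]))] for i in range(len(a))]


def transpose(a):
    return [list(row) for row in zip(*a)]


def add(a, b):
    return [[x + y for x, y in zip(ra, rb)] for ra, rb in zip(a, b)]


def diag(d, n=3):
    return [[d if i == j else 0.0 for j in range(n)] for i in range(n)]


def inverse(m):
    """Gauss-Jordan inverse with partial pivoting for a small square matrix."""
    n = len(m)
    aug = [list(row) + [float(i == j) for j in range(n)]
           for i, row in enumerate(m)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        p = aug[col][col]
        aug[col] = [v / p for v in aug[col]]
        for r in range(n):
            if r != col:
                f = aug[r][col]
                aug[r] = [vr - f * vc for vr, vc in zip(aug[r], aug[col])]
    return [row[n:] for row in aug]


def wrap(angle):
    """Wrap an angle to [-pi, pi]."""
    return math.atan2(math.sin(angle), math.cos(angle))


def predict(x, P, v, omega, dt, Q):
    """Propagate the pose with an assumed unicycle model and linearize it."""
    px, py, th = x
    x_pred = [px + v * math.cos(th) * dt,
              py + v * math.sin(th) * dt,
              wrap(th + omega * dt)]
    # Jacobian of the motion model with respect to the state.
    F = [[1.0, 0.0, -v * math.sin(th) * dt],
         [0.0, 1.0, v * math.cos(th) * dt],
         [0.0, 0.0, 1.0]]
    return x_pred, add(matmul(matmul(F, P), transpose(F)), Q)


def update(x, P, z, R):
    """Correct with a full-pose measurement, so the measurement matrix is I."""
    innovation = [z[0] - x[0], z[1] - x[1], wrap(z[2] - x[2])]
    K = matmul(P, inverse(add(P, R)))  # Kalman gain with H = I
    x_new = [x[i] + sum(K[i][j] * innovation[j] for j in range(3))
             for i in range(3)]
    x_new[2] = wrap(x_new[2])
    I_minus_K = [[float(i == j) - K[i][j] for j in range(3)] for i in range(3)]
    return x_new, matmul(I_minus_K, P)


# One predict/update cycle with made-up inputs and noise values.
x, P = [0.0, 0.0, 0.0], diag(0.1)
x, P = predict(x, P, v=1.0, omega=0.0, dt=0.1, Q=diag(0.01))
x, P = update(x, P, z=[0.12, 0.01, 0.0], R=diag(0.05))
print(x)
```

The fused estimate lands between the model prediction and the measurement, weighted by their assumed uncertainties, which is what removes the jumps from a raw pose stream.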

Likewise, we needed to validate whether the classical computer vision system would perform well under different lighting conditions. This is a short example of the QCar detecting the stop sign and waiting before continuing the run.

Figure 3: Traffic detection system in action with physical system
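The stop-and-wait behaviour described above can be sketched as a small state machine that sits between the detector and the speed controller. This is a hypothetical illustration rather than the behaviour stack on the QCar: the class name, the state names, and the hold and cooldown durations are all assumptions.

```python
from dataclasses import dataclass


@dataclass
class StopSignBehaviour:
    hold_time: float = 3.0   # assumed seconds to wait at the sign
    cooldown: float = 5.0    # assumed seconds to ignore the sign after leaving
    state: str = "DRIVE"
    timer: float = 0.0

    def step(self, sign_detected: bool, cruise_speed: float, dt: float) -> float:
        """Advance by dt seconds and return the speed command in m/s."""
        if self.state == "DRIVE":
            if sign_detected:
                self.state, self.timer = "STOP", 0.0
                return 0.0
            return cruise_speed
        self.timer += dt
        if self.state == "STOP":
            if self.timer >= self.hold_time:
                # Waited long enough: pull away and ignore the sign for a while.
                self.state, self.timer = "COOLDOWN", 0.0
                return cruise_speed
            return 0.0
        # COOLDOWN: keep driving even if the same sign is still in view.
        if self.timer >= self.cooldown:
            self.state = "DRIVE"
        return cruise_speed


behaviour = StopSignBehaviour(hold_time=1.0, cooldown=1.0)
detections = [True, True, True, True, False, False]
commands = [behaviour.step(seen, cruise_speed=0.5, dt=0.5) for seen in detections]
print(commands)
```

The cooldown state is the design choice worth noting: without it, the vehicle would stop again immediately because the sign is usually still visible as it pulls away.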

The last component of this system was a trip planning behaviour system. Until this point, the application could plan a path from point A to point B. As part of this challenge, the ask was to complete a trip consisting of a starting location, a series of stop points, a drop-off, and a return to a taxi hub. This meant a completely new behaviour system in charge of requesting information from the user and making the necessary calls to the rest of the autonomy stack so that the QCar could complete the desired task.
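One way to picture this layer is as a function that expands a ride request into an ordered list of point-to-point legs, each of which can be handed to the existing A-to-B planner. The sketch below is an assumption about how such a layer could look, not the actual implementation: `RideRequest`, `plan_trip`, and the node names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class RideRequest:
    pickup: str
    dropoff: str
    stops: list = field(default_factory=list)  # optional intermediate stops


def plan_trip(current, ride, hub="hub"):
    """Expand a ride request into ordered (start, goal) legs ending at the hub."""
    waypoints = [current, ride.pickup, *ride.stops, ride.dropoff, hub]
    # Skip zero-length legs, e.g. when the vehicle is already at the pickup.
    return [(a, b) for a, b in zip(waypoints, waypoints[1:]) if a != b]


legs = plan_trip("hub", RideRequest(pickup="n3", dropoff="n9", stops=["n5"]))
for start, goal in legs:
    print(f"plan path: {start} -> {goal}")
```

Keeping the trip logic separate from the path planner means the planner only ever has to solve the single-leg problem it already handled.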

The end result is a self-contained trip planning system, much like a robotaxi. It combines ROS for localization with a series of control and behaviour planning systems that govern the actions of the QCar as it drives around the space. In this video demonstration, we see the QCar start at the pick-up location and travel to a drop-off before heading to the taxi hub area. The full trip is planned autonomously; the QCar just needs to be started in a known reference frame.

Figure 4: Autonomy demonstration in action

This application example is a proof of concept for a fully fleshed-out autonomous system that could be expanded toward production.
